The zone of proximal depravity

In the digital era it’s always difficult to tell the difference between behaviours that have always been present, but which we now notice differently, and those that are fundamentally changed by the digital environment. Often it’s a bit of both. One such aspect I’ve been thinking about recently is our exposure as individuals to extreme views. It’s one where I think the digital world has caused a significant shift for all of us.

In the analogue world, it has usually been pretty easy not to find yourself exposed to extreme views, or targeted by extremists, or caught in the middle of a conversation with fascists, terrorists, conspiracy theorists, and assorted undesirables. This is because you can usually spot them, and also because conversations go through stages; it rarely goes, “hello, pleased to meet you, let me tell you about white supremacy”. I remember once travelling to Germany by coach in the 80s. I was on the ferry with a group of construction workers (it was Auf Wiedersehen, Pet time), and I got drinking and chatting with them. One of them was clearly intelligent and amiable and we chatted over a couple of Stellas. And then, from a conversation about football, he was telling me about the global Jewish conspiracy, the research he had done, how they controlled everything, etc. Like many conspiracy devotees, he was intelligent and erudite, but completely monomaniacal and twisted. The reason I remember this encounter is that it was a failure of my radar. You get blokes (and it’s usually a bloke) like this in the pub, but you quickly detect it, either in their aggressive tone or the direction of the conversation, and move away. But in this case I had been caught out and found myself in the middle of this guy’s derangement before I knew where I was.

I raise this memory because it is now what we all face on a daily basis. Mike Caulfield gives an intriguing and terrifying account of how quickly the algorithm on Pinterest takes you from recipes to full-on conspiracy theory and fake news:

What is new is the way algorithms now actively seek to bypass our previous defences against extremism. For example, Facebook’s “pages you may like” algorithm suggested Britain First to me (I mean, wtf?). Or the time my daughter came downstairs visibly shaken because a misogynistic video from Milo had popped up in her timeline and, not knowing who he was, she had watched it. Or when a friend of a friend on FB decided to dump an offensive meme in the middle of a conversation. You’ll ALL have similar examples, and they happen every day.

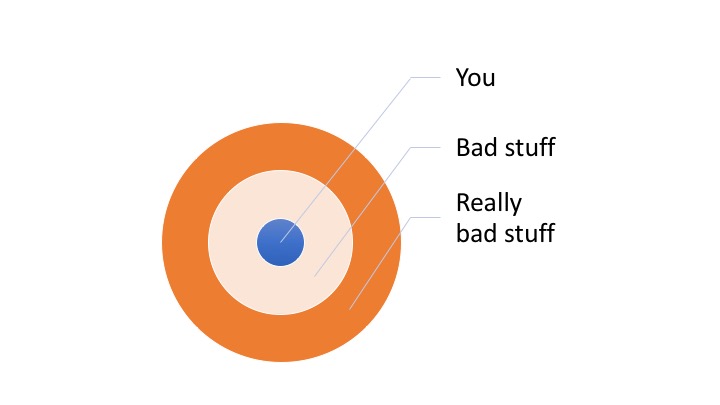

To borrow, slightly tongue in cheek, from Vygotsky, we can think of this as a collapse in our zone of proximal depravity. Before you got to the real zealots and extremists, you had to go through a layer of protection and increasing signals. You rarely got embedded in it by accident:

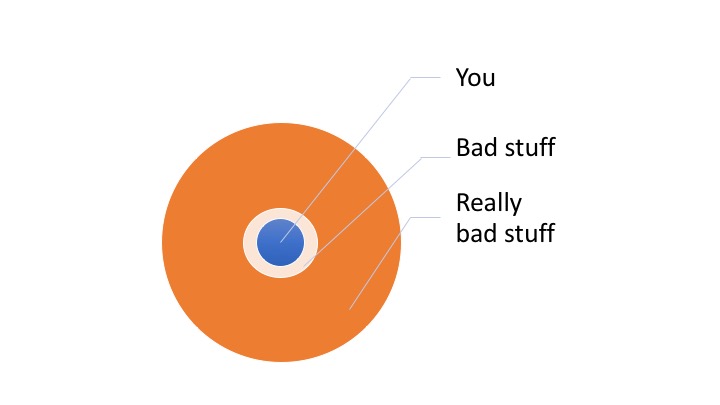

But what the algorithmic feed does is effectively collapse this protective layer, so our previous signals, defensive mechanisms and means of establishing distance are no longer effective. So now it’s just a thin membrane:

This has implications. For the individual, I worry about our collective mental health: what it does to us to be angry, to be made to engage with this stuff, to be scared and to feel that it is more prevalent than maybe it really is. For society, it normalises these views, desensitises us to them and also raises the emotional temperature of any discussion. One way of viewing digital literacy is as reestablishing the protective layer, learning for the digital world the signals and techniques that we have in the analogue one. And perhaps the first step in that is recognising how that layer has been diminished by algorithms.

2 Comments

Andrew Smith

“let me tell you about my conspiracy theory” … ok, only joking … however what you share as a semi vygotsky’esque notion isn’t too far from thinking around the social media algorithms becoming a messy amplification of cognitive bias … (take a peek at … https://betterhumans.coach.me/cognitive-bias-cheat-sheet-55a472476b18).

We are all biased; however, our zones are now not only being eroded by bad stuff and really bad stuff … we are seeing biased and very biased. Unless self-reflection and critical thinking are taught, teaching everyone to critically analyse what is said and who is saying it (as well as why it appealed to me), then sadly I think we are only going to see more of this, relegating the truth to the domain of fake news.

Meanwhile have a great Christmas and thanks for listening to my rant/conspiracy 😉

Clint Lalonde

I love this riff on Vygotsky, and the connection to the importance of digital literacy. Thanks for capturing the level of angst that I know I feel more and more these days. If there is one thing we have learned over the years, it is that networks amplify, for better or for worse. These days, it does feel like the “for worse” is getting louder and harder to filter out. Filter failure indeed. Hard to filter when it feels the filters are being rigged to work against you.